AI Inference at the edge runs models close to data sources to reduce latency, preserve privacy, and optimize resource usage.

AI Inference architecture diagram

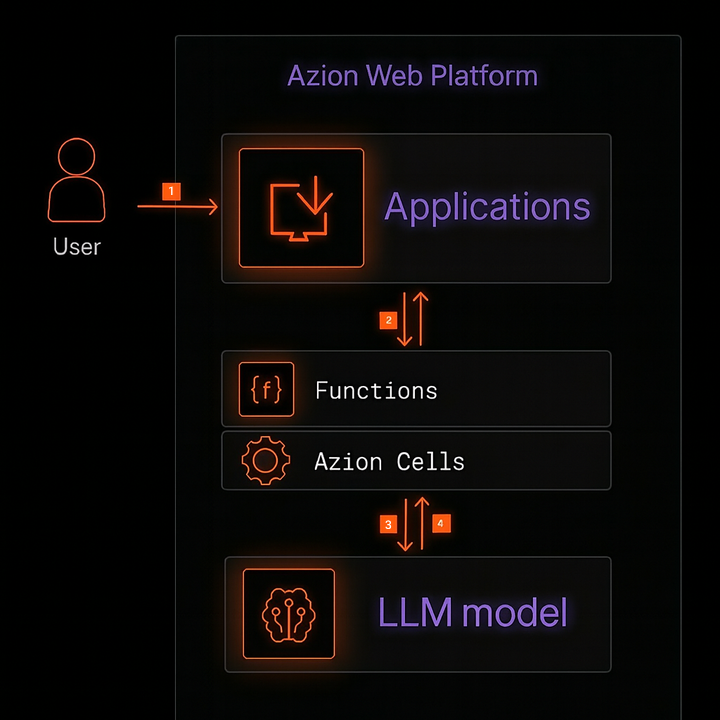

The following figure illustrates a reference architecture for AI inference at the edge.

Data flow

- The user sends a request to the application domain.

- The nearest edge node receives the request and forwards it to the application which applies policies and calls the function.

- The function orchestrates the inference: preprocesses input, and calls the model via

Azion.AI.runthrough the Azion Cells API. - The agent processes the user’s input and sends it back to the user through the same path.

Components

- Applications: application layer on Azion’s distributed network.

- Functions: executes inference logic, orchestrates RAG, and integrates external services.

- AI Inference (Edge Runtime): runs AI models directly at the edge.

- Global Infrastructure: Azion’s distributed global network.

- Orchestrator: manages requests through the Edge Node for Cells execution.

Note: Azion Cells is an advanced feature that is part of the Orchestrator. To activate it, you need to contact Azion’s sales team to verify requirements and enable the product in your account.

Implementation

Use the AI Inference Starter Kit template to accelerate your journey and have a ready-to-use application with OpenAI-compatible APIs running at the edge.

- Access the Azion Console.

- Click the

+ Createbutton to open the templates page. - Select the

AI Inference Starter Kittemplate. - On the template page, enter a name for your application and click

Deploy. - Wait for the application to be available at the domain

your-url.map.azionedge.net(or your custom domain). - Interact with the model via your application’s OpenAI-compatible endpoint.

Example of interaction with the nanonets/Nanonets-OCR-s model, which is an instruction-oriented OCR model that extracts text from images/documents:

curl -X POST https://YOUR-DOMAIN.map.azionedge.net/v1/chat/completions \ -H "Content-Type: application/json" \ -d '{ "model": "nanonets/Nanonets-OCR-s", "max_tokens": 500, "messages": [ { "role": "user", "content": [ { "type": "image_url", "image_url": { "url": "https://www.any-url-example.com/example.jpg" } }, { "type": "text", "text": "Extract the text from the document above in structured Markdown. Tables in HTML. Equations in LaTeX. Use <watermark>...</watermark> for watermarks and <page_number>...</page_number> for pagination." } ] } ] }'Notes:

- Access the Azion AI Inference Models page to see more available models.

- If you added authentication to your API, include the appropriate header (for example,

-H "Authorization: Bearer <TOKEN>").

Example of a function that executes Azion.AI.run inside a Function:

async function handleRequest(request) { const url = new URL(request.url); if (url.pathname !== "/ocr") return new Response("Not found", { status: 404 });

const modelResponse = await Azion.AI.run("nanonets/Nanonets-OCR-s", { stream: false, max_tokens: 500, messages: [ { role: "user", content: [ { type: "image_url", image_url: { url: "https://www.any-url-example.com/example.jpg" } }, { type: "text", text: "Extract the text from the document above in structured Markdown. " + "Tables in HTML. Equations in LaTeX. Use <watermark>...</watermark> " + "for watermarks and <page_number>...</page_number> for pagination." } ] } ] });

return new Response(JSON.stringify(modelResponse), { headers: { "Content-Type": "application/json" } });}

addEventListener("fetch", (event) => { event.respondWith(handleRequest(event.request));});Observability

- Real-Time Metrics: analyze events and gain insights into your applications’ performance.

- Real-Time Events: track requests, raw data, logs, and complex queries.