AI Inference

Preview

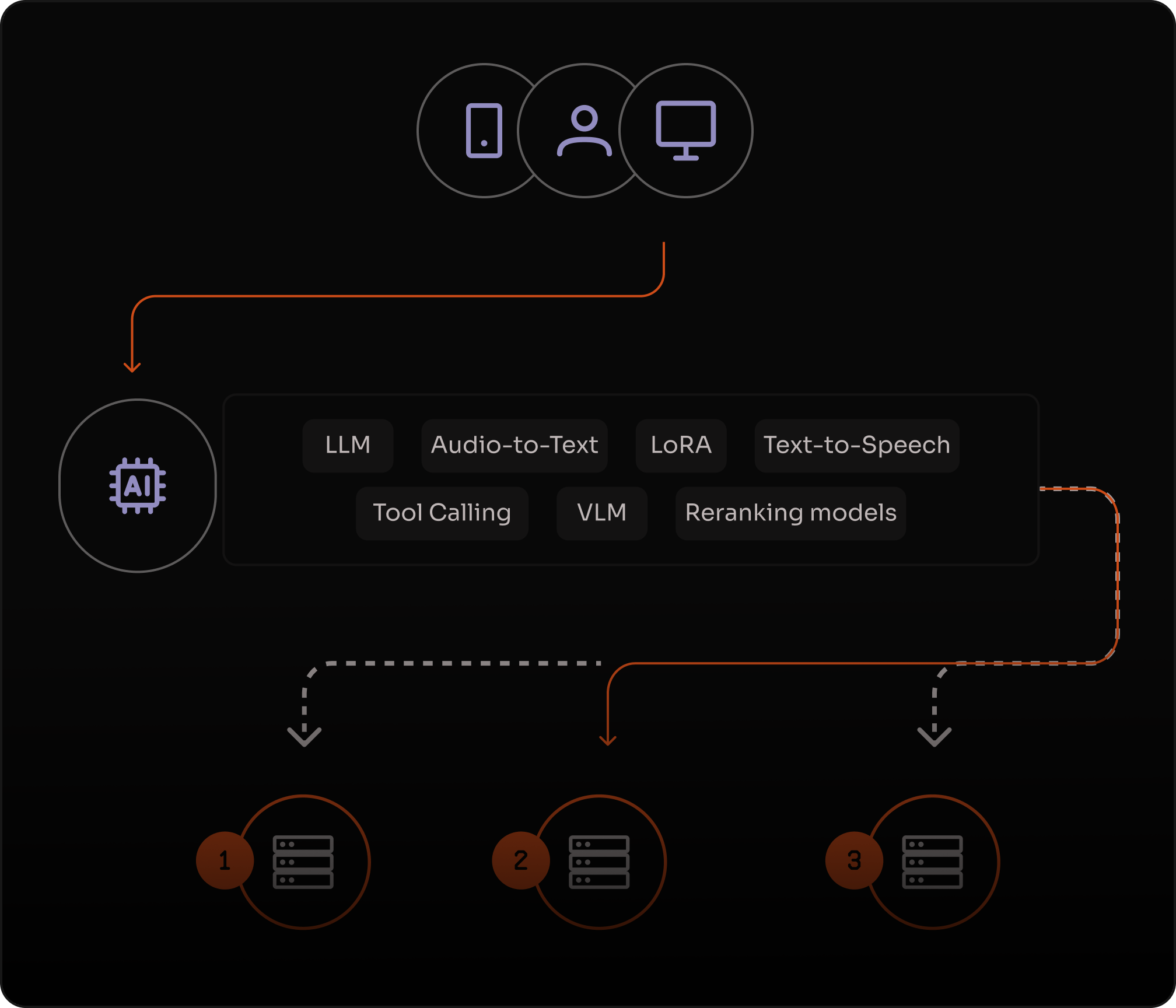

Deploy and run low-latency AI inference for LLMs, VLMs, and multimodal models through an OpenAI-compatible API on distributed infrastructure, with no GPU clusters to manage.

faster inference

% lower compute costs

% lower latency

Low-latency inference for real-time user experiences

Distributed execution keeps responses fast by reducing both time-to-first-token and end-to-end latency.

Serverless scaling without GPU operations

Handle spiky demand without provisioning GPU clusters. Scale automatically from the first request to peak load while keeping costs aligned with usage.

Reliable by design for production workloads

Automatic failover keeps mission-critical inference available, even during traffic spikes or regional failures.

"With Azion, we’ve been able to scale our proprietary AI models without worrying about infrastructure. These solutions inspect millions of websites daily, detect and neutralize threats with speed and precision, and execute the fastest automatic takedown in the market."

Fabio Ramos

CEO

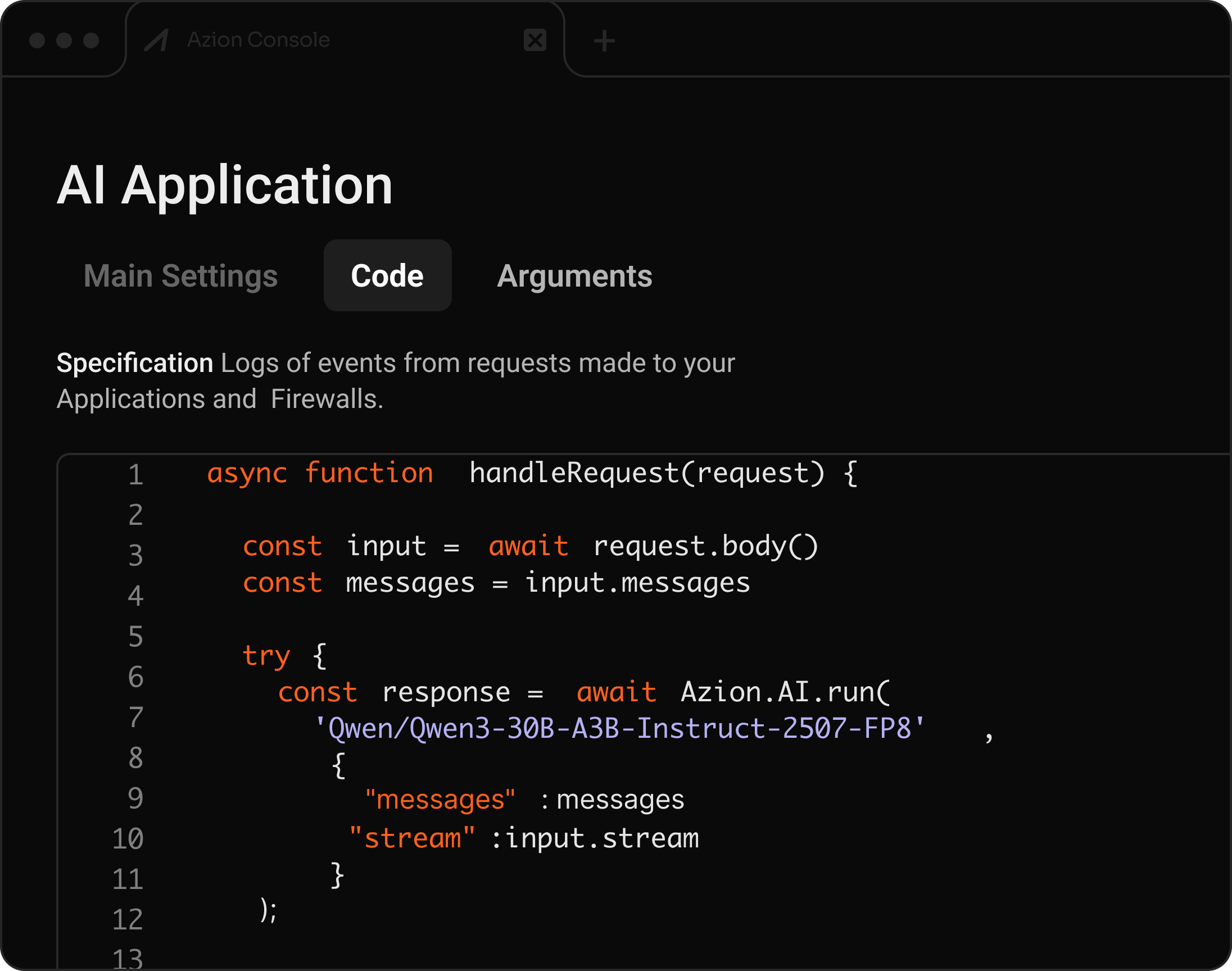

Build, customize, and serve AI models in production

Deploy and run LLMs, VLMs, embedding models, and multimodal models, and integrate them into distributed applications with automatic scaling.

LLMs & VLMs · Functions integration · OpenAI-compatible · Auto-scaling

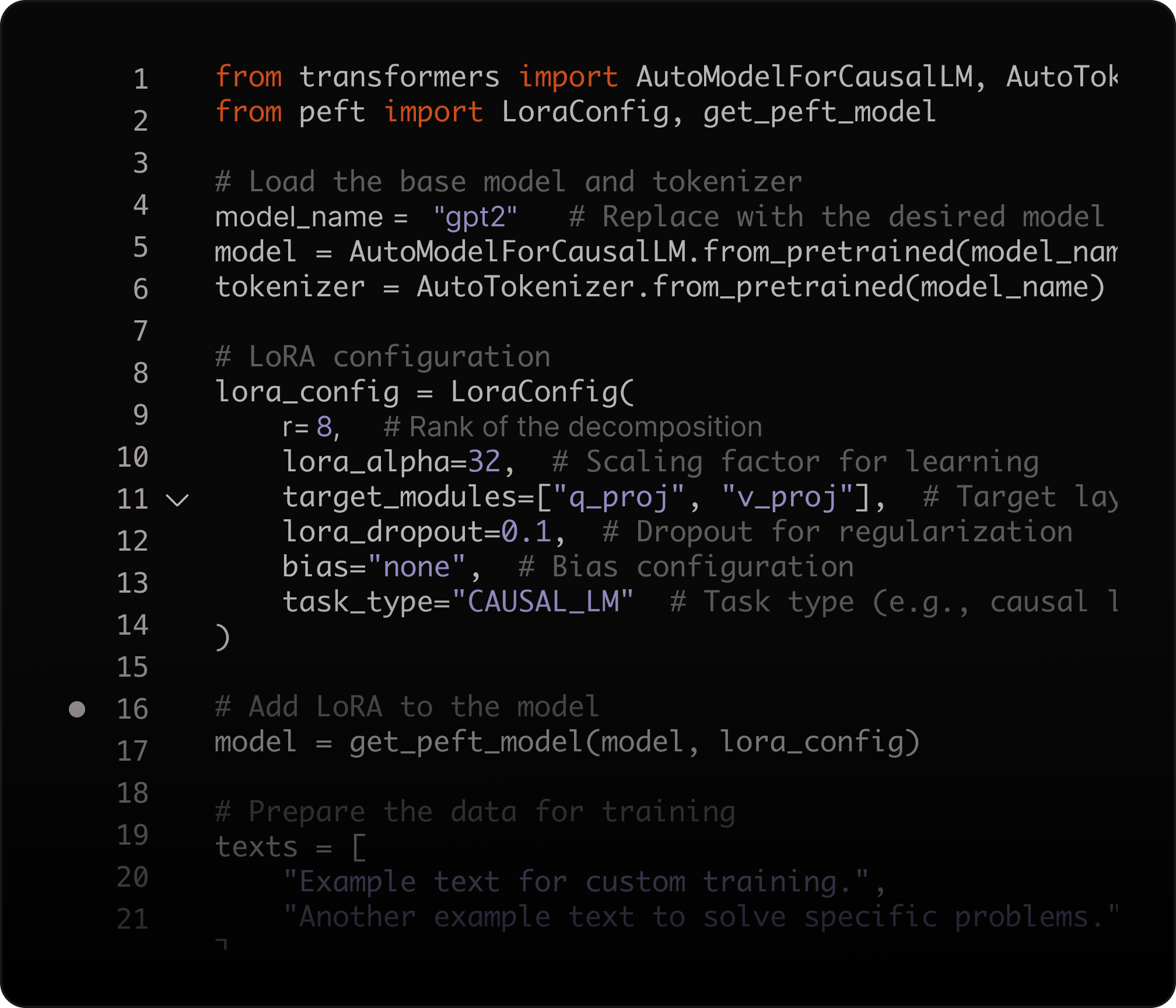

Fine-tune with LoRA for domain-specific performance

Adapt model outputs to your domain using Low-Rank Adaptation (LoRA), improving accuracy on domain-specific tasks at a fraction of the compute cost of full fine-tuning.

LoRA fine-tuning · Domain customization · No full retraining · Lower compute costs

What you can build with AI Inference

Frequently Asked Questions

What is Azion AI Inference?

Azion AI Inference is a serverless platform for deploying and running AI models globally. Key features include an OpenAI-compatible API for easy migration; support for LLMs, VLMs, embeddings, and reranking; automatic scaling without GPU management; and low-latency distributed execution. You can create production endpoints and integrate them into Applications and Functions.

Which models can I run?

You can choose from a catalog of open-source models available in AI Inference. The catalog includes different model types for common workloads (text and code generation, vision-language, embeddings, and reranking) and evolves as new models become available.

Is it compatible with the OpenAI API?

Yes. AI Inference supports an OpenAI-compatible API format, so you can keep your client SDKs and integration patterns and migrate by updating the base URL and credentials. See the product documentation: https://www.azion.com/en/documentation/products/ai/ai-inference/
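As a rough illustration of what "update the base URL and credentials" means, the sketch below builds a standard OpenAI Chat Completions request (`POST {base_url}/chat/completions` with a Bearer token and a JSON body of `model` and `messages`) using only the Python standard library. The base URL, API key, and model name are hypothetical placeholders, not real Azion values; take the actual ones from the product documentation.

```python
import json
import urllib.request

# Hypothetical placeholders: replace with the endpoint URL, key, and model
# name from your own AI Inference setup (see the product documentation).
BASE_URL = "https://example-ai-inference-endpoint/v1"
API_KEY = "YOUR_API_KEY"
MODEL = "your-model-name"


def build_chat_request(base_url: str, api_key: str, model: str,
                       messages: list[dict]) -> urllib.request.Request:
    """Build an OpenAI-style POST /chat/completions request.

    Compared with a request aimed at OpenAI itself, only base_url and
    api_key change; the path, headers, and JSON body keep the same shape.
    """
    body = json.dumps({"model": model, "messages": messages}).encode("utf-8")
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=body,
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def send(request: urllib.request.Request) -> str:
    """Send the request and return the first choice's message content."""
    with urllib.request.urlopen(request) as response:
        payload = json.load(response)
    return payload["choices"][0]["message"]["content"]
```

Usage: `send(build_chat_request(BASE_URL, API_KEY, MODEL, [{"role": "user", "content": "Hello!"}]))`. If you already use the official OpenAI Python SDK, the equivalent change is typically just the client constructor, `OpenAI(base_url=BASE_URL, api_key=API_KEY)`, with the rest of your code unchanged.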

Can I fine-tune models?

Yes. AI Inference supports model customization with Low-Rank Adaptation (LoRA), so you can specialize open-source models for your domain without full retraining. Starter guide: https://www.azion.com/en/documentation/products/guides/ai-inference-starter-kit/
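To show why LoRA avoids full retraining, here is a minimal plain-Python sketch of the general technique (not of how AI Inference applies adapters internally, which this page does not describe). A frozen weight matrix `W` (d × k) is combined with a trainable low-rank update `(alpha / r) * B @ A`, where `B` is d × r and `A` is r × k, so only `r * (d + k)` parameters are trained instead of `d * k`. The function names are illustrative.

```python
Matrix = list[list[float]]
Vector = list[float]


def matvec(m: Matrix, v: Vector) -> Vector:
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]


def lora_forward(W: Matrix, A: Matrix, B: Matrix, x: Vector,
                 alpha: float, r: int) -> Vector:
    """Compute h = W x + (alpha / r) * B (A x).

    W stays frozen; only the small matrices A (r x k) and B (d x r)
    would be updated during fine-tuning.
    """
    base = matvec(W, x)                 # output of the frozen base weights
    update = matvec(B, matvec(A, x))    # low-rank correction
    scale = alpha / r
    return [b + scale * u for b, u in zip(base, update)]


def trainable_params(d: int, k: int, r: int) -> tuple[int, int]:
    """Return (full fine-tuning params, LoRA params) for one d x k layer."""
    return d * k, r * (d + k)
```

For a single 4096 × 4096 layer with rank 8, `trainable_params(4096, 4096, 8)` gives 16,777,216 parameters for full fine-tuning versus 65,536 for LoRA, about 0.4%. In the usual setup `B` starts at zero, so the adapted model initially behaves exactly like the base model.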

How do I build RAG and semantic search?

Use AI Inference with SQL Database Vector Search to store embeddings and retrieve relevant context for Retrieval-Augmented Generation (RAG). This enables semantic search and hybrid search patterns without additional infrastructure.
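The retrieval step of RAG can be sketched as follows: rank stored chunks by cosine similarity to the query embedding, keep the top k, and place them in the prompt sent to the model. In a real deployment the embeddings would come from an embedding model and the search would run in SQL Database Vector Search; here an in-memory list with toy vectors stands in for both, and all names are hypothetical.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query_vec: list[float], docs: list[dict], k: int) -> list[dict]:
    """Return the k documents whose embeddings are most similar to the query.

    Each doc is a dict with "text" and "embedding" keys (in-memory stand-in
    for rows returned by a vector search).
    """
    ranked = sorted(docs,
                    key=lambda d: cosine_similarity(query_vec, d["embedding"]),
                    reverse=True)
    return ranked[:k]


def build_rag_prompt(question: str, context_docs: list[dict]) -> str:
    """Assemble a prompt that grounds the model in the retrieved context."""
    context = "\n".join(f"- {d['text']}" for d in context_docs)
    return ("Answer using only the context below.\n"
            f"Context:\n{context}\n\nQuestion: {question}")
```

The resulting prompt becomes the user message of a normal chat completion request, so generation itself needs no special API.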

Can I build AI agents and tool-calling workflows?

Yes. AI Inference can be used to power agent patterns (for example, ReAct) and tool-calling workflows when combined with Applications, Functions, and external tools. Azion also provides templates and guides for LangChain/LangGraph-based agents.
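A tool-calling loop usually works like this: send the conversation plus tool schemas, run any `tool_calls` the model returns, append the results as `tool` messages, and call the model again until it answers in plain text. The sketch below shows the dispatch step using the OpenAI Chat Completions message format, assuming the chosen model and endpoint support the `tools` / `tool_calls` fields. The `get_order_status` tool is hypothetical.

```python
import json


def get_order_status(order_id: str) -> dict:
    """Hypothetical tool: stand-in for a real database or API lookup."""
    return {"order_id": order_id, "status": "shipped"}


# Maps tool names the model may request to local Python functions.
TOOLS = {"get_order_status": get_order_status}

# Schema sent in the "tools" field of a chat completions request.
TOOL_SCHEMAS = [{
    "type": "function",
    "function": {
        "name": "get_order_status",
        "description": "Look up the status of an order by its ID.",
        "parameters": {
            "type": "object",
            "properties": {"order_id": {"type": "string"}},
            "required": ["order_id"],
        },
    },
}]


def run_tool_calls(assistant_message: dict) -> list[dict]:
    """Execute every tool call in an assistant message.

    Returns "tool" role messages to append to the conversation before the
    next model call. Returns [] when the model answered without tools.
    """
    results = []
    for call in assistant_message.get("tool_calls") or []:
        fn = TOOLS[call["function"]["name"]]
        args = json.loads(call["function"]["arguments"])  # arguments arrive as a JSON string
        output = fn(**args)
        results.append({
            "role": "tool",
            "tool_call_id": call["id"],
            "content": json.dumps(output),
        })
    return results
```

An empty result from `run_tool_calls` is the natural stop condition for the loop. Frameworks such as LangChain/LangGraph wrap this same cycle.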

How do I deploy AI inference into my application?

Create an AI Inference endpoint and integrate it into your request flow using Applications and Functions. This lets you add AI capabilities to existing APIs and user experiences with distributed execution and managed scaling.
